Making research insights easier to find in Maze

Solution:

Maze Search, an AI-powered discovery system that enables teams to retrieve insights across their organization’s knowledge base.

Role:

Sole product designer, leading discovery, concept validation, and end-to-end design in close collaboration with Product and Engineering.

Maze is an all-in-one research platform that helps designers, researchers, and product managers rapidly collect, analyze, and incorporate user feedback across the product life cycle.

As teams run more studies, they accumulate a growing body of customer knowledge from interviews, surveys, and usability tests. Over time, this repository becomes a rich source of insights about customers and product decisions.

But the more research accumulates, the harder that knowledge becomes to access.

Unless someone already knows which studies exist and where to look, critical insights remain invisible. Teams spend time manually browsing past projects, slowing decisions and leading to repeated work.

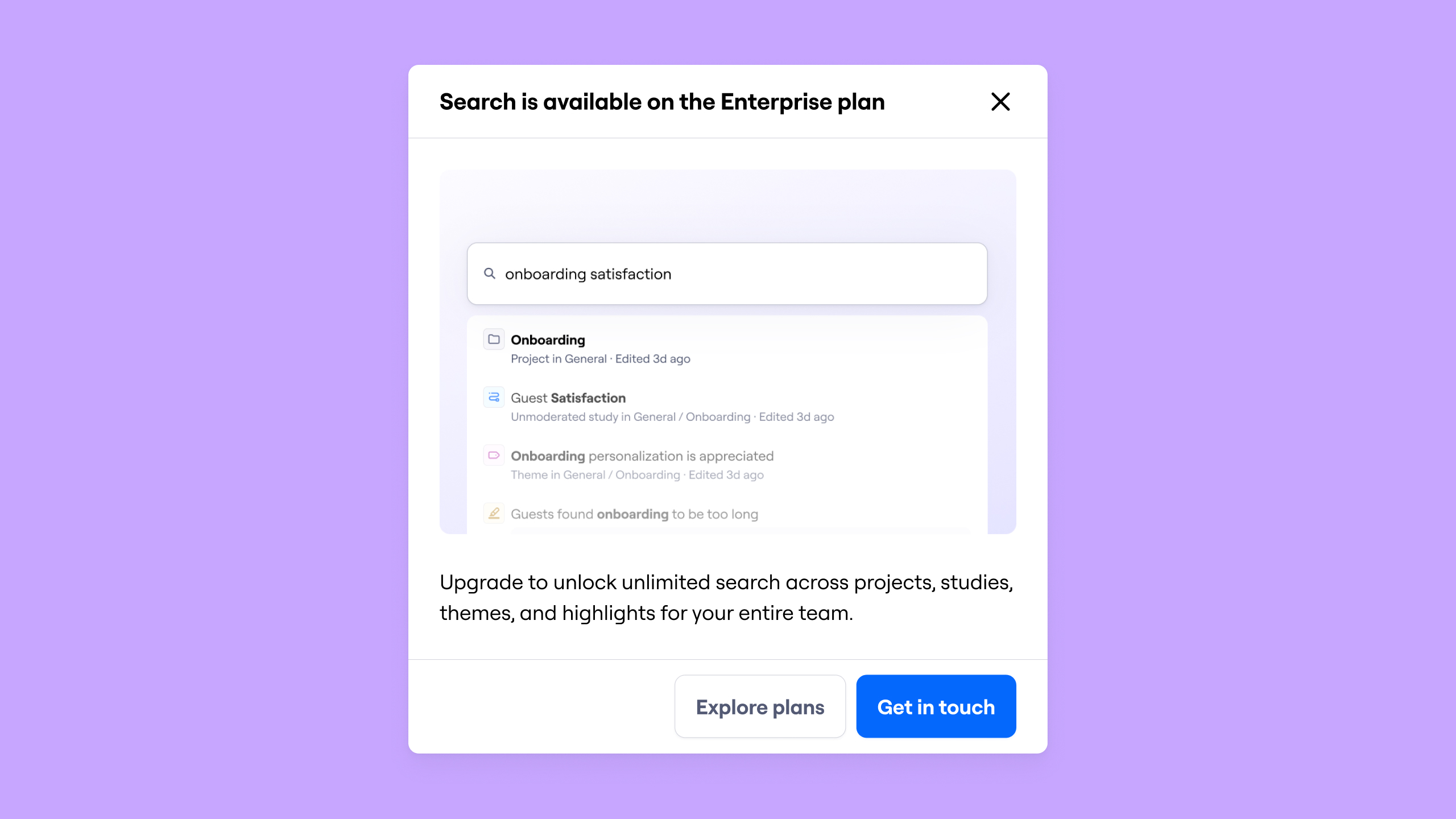

This is amplified for Enterprise customers, where multiple teams run research with limited cross-team visibility.

The organization has the knowledge, but it isn’t easily discoverable.

How might we make accumulated research discoverable at scale across studies and teams?

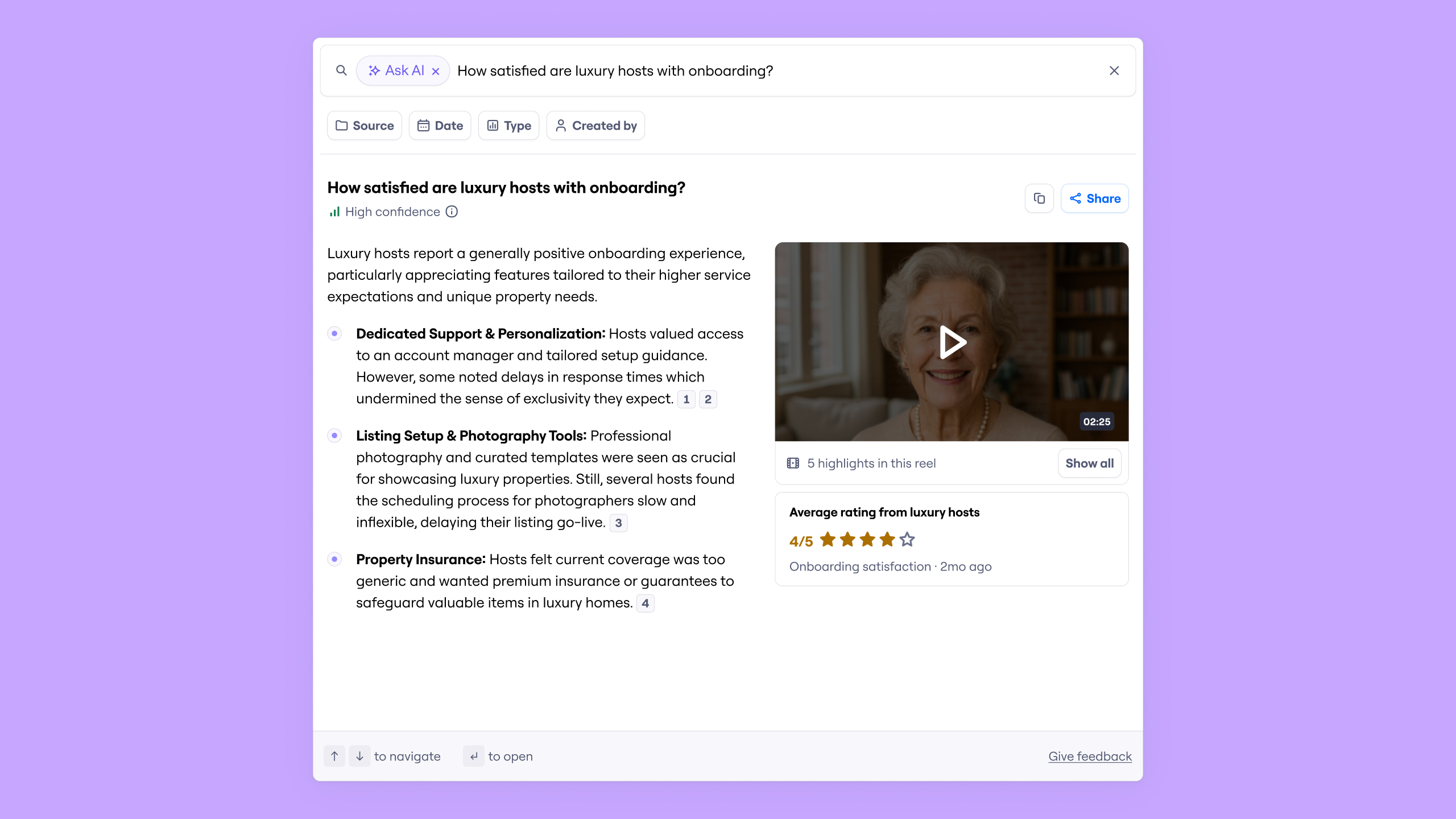

We knew early on that Search and Ask AI could unlock accumulated research inside Maze.

Before moving into solution design, the team aligned on key risks around relevance, trust, and governance. To validate those assumptions, we conducted concept interviews using an interactive prototype.

Through these conversations, we learned:

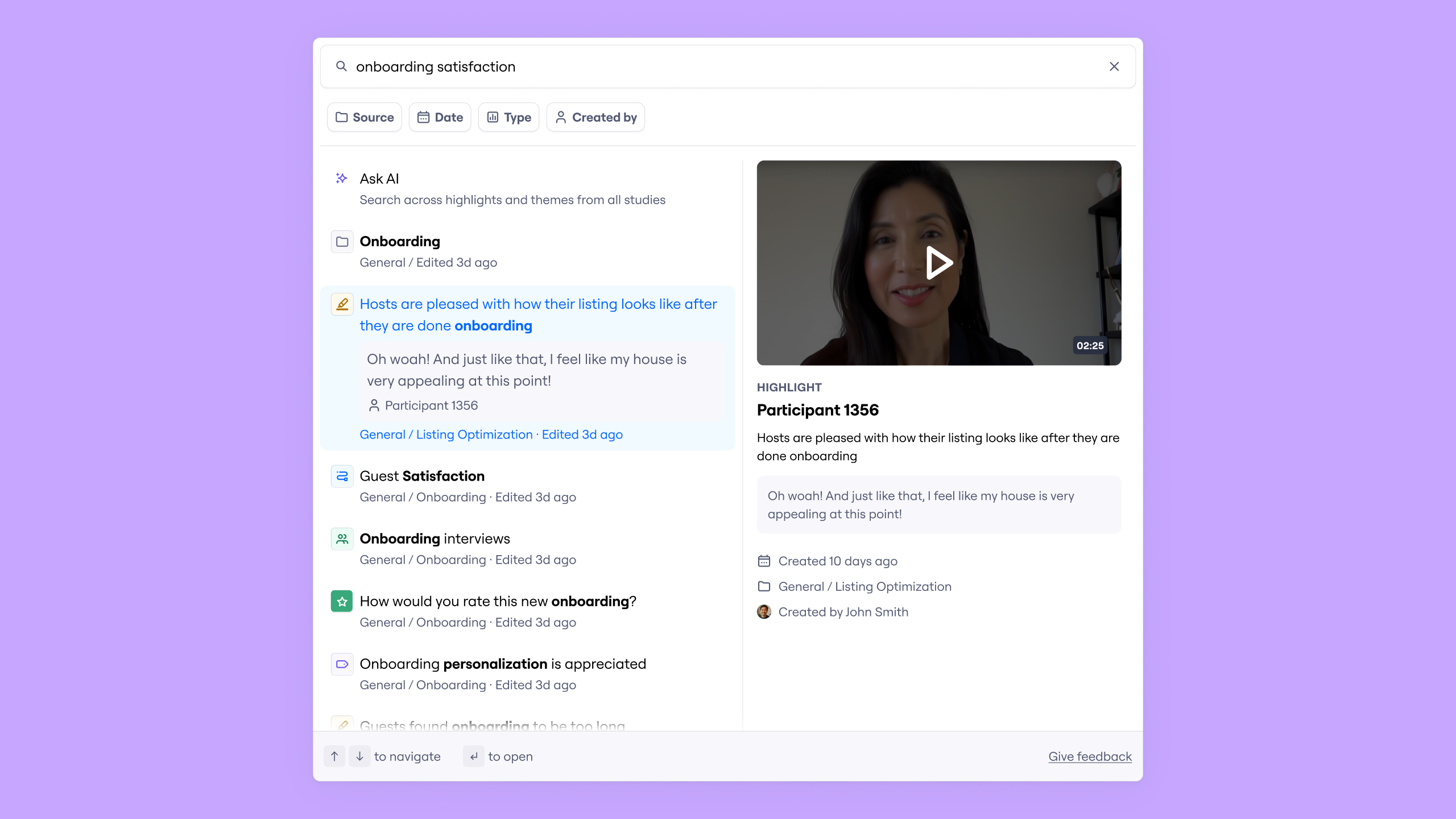

- Discovery requires structured results: Customers saw immediate value in cross-study search, but clarified what each result needed to show, including recency indicators and origin context, and asked for meaningful filters.

- Trust depends on traceability: AI summaries were only valuable if they linked directly to source evidence. Citations, video timestamps, and quantitative elements significantly increased confidence.

- Stakeholder access introduces the need for guardrails: Researchers supported self-service, but expressed concern about insights being taken out of context. Clear framing and guardrails were important to reduce cherry-picking.

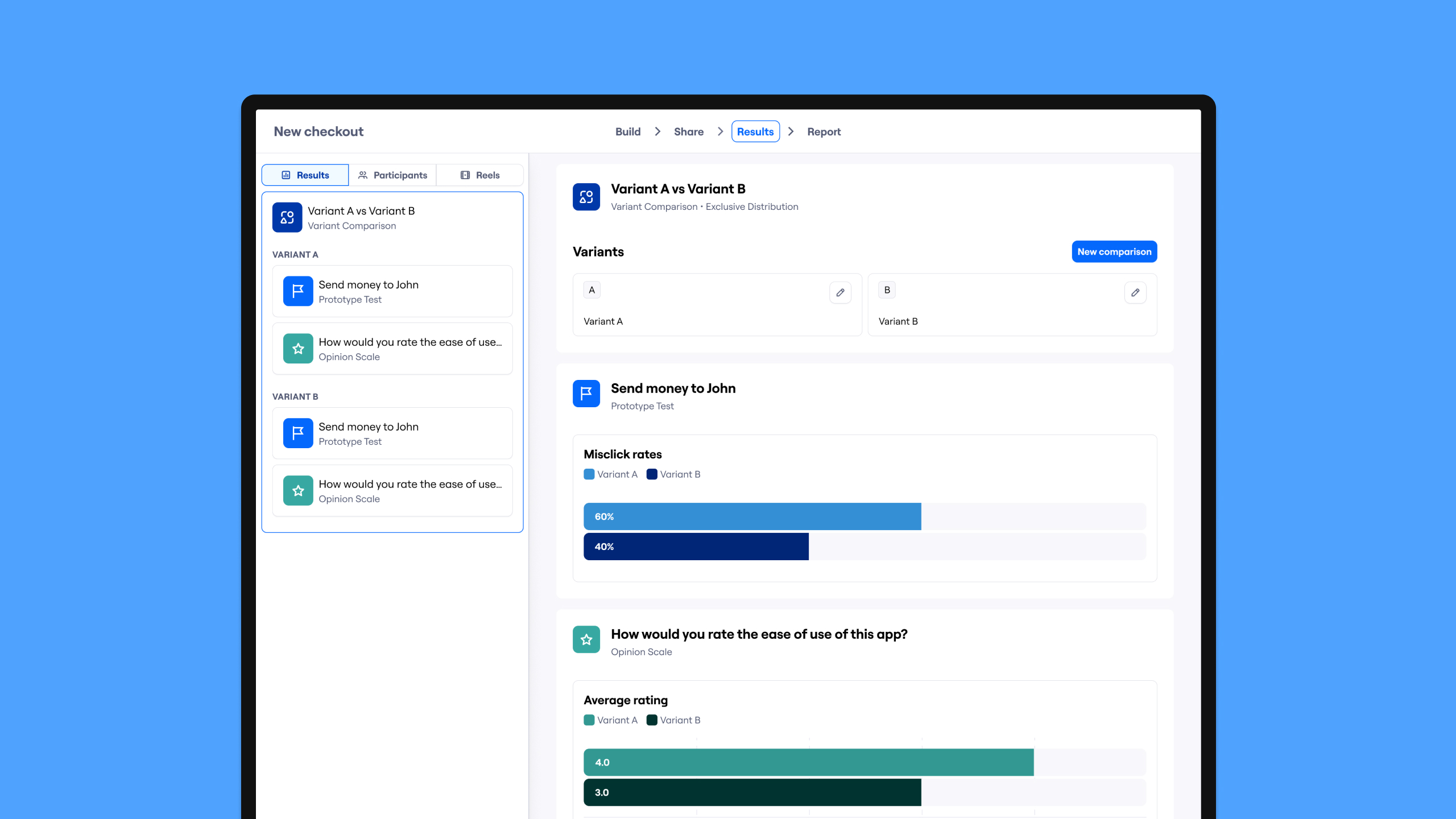

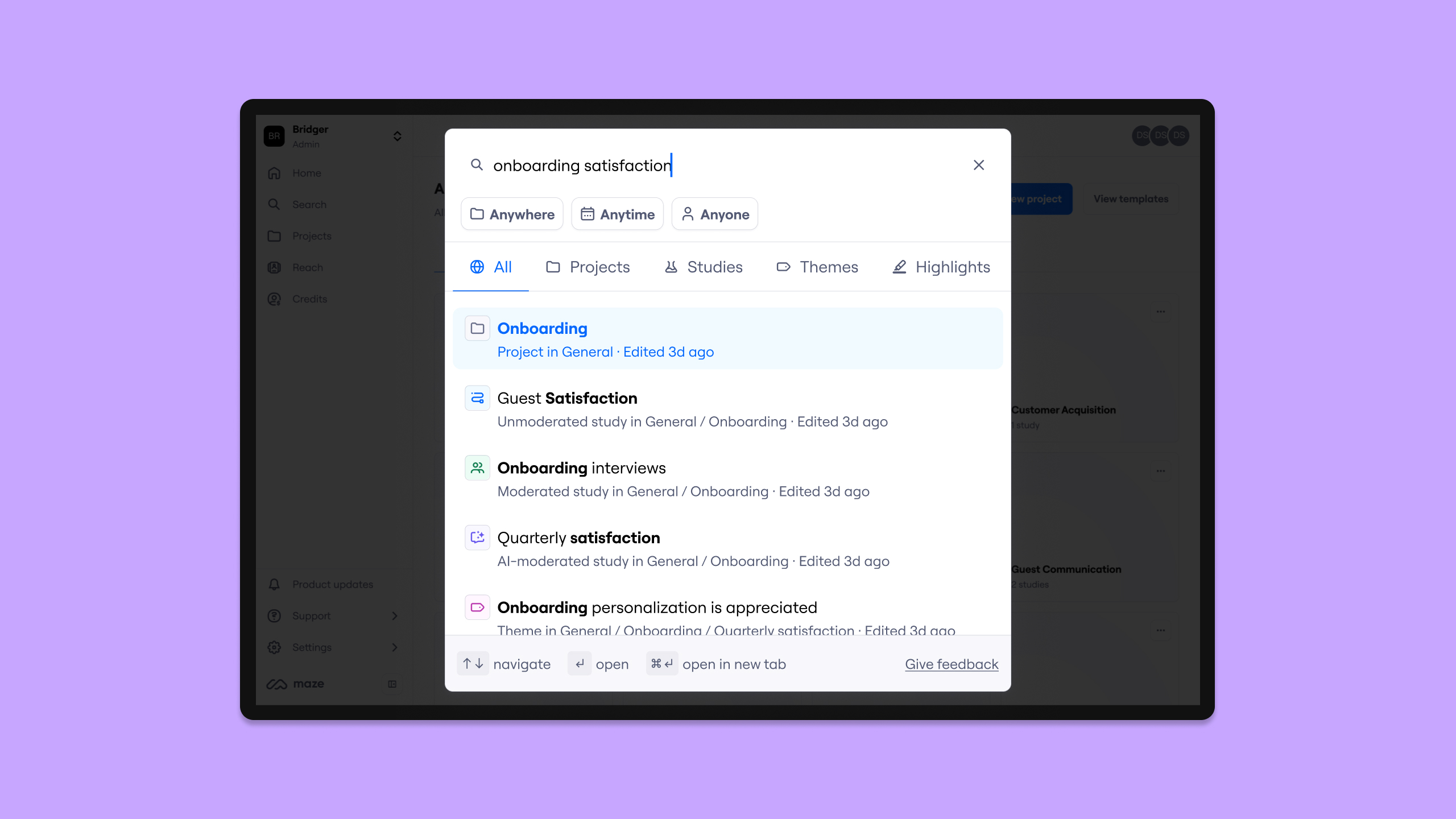

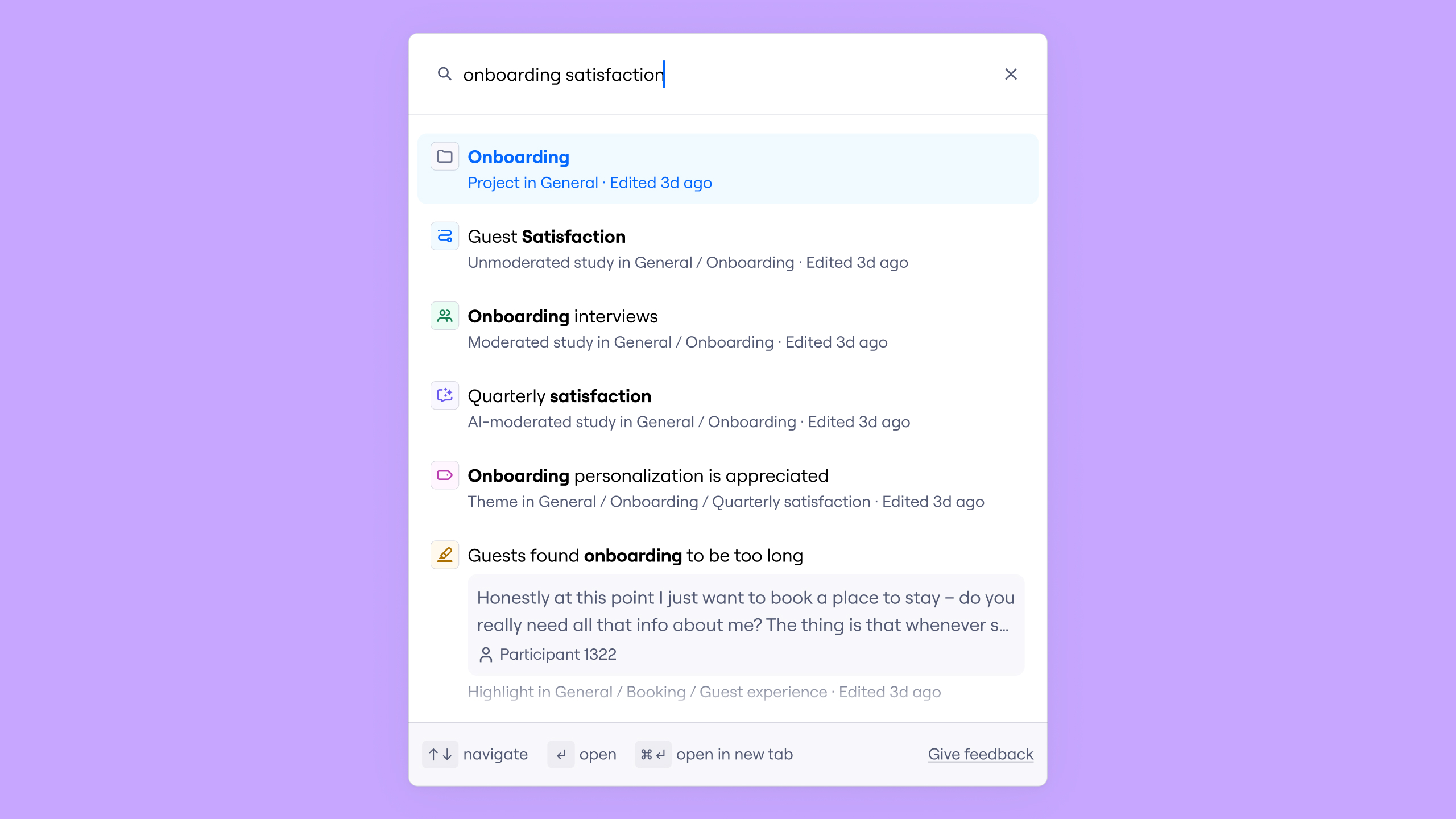

Rather than building the full vision at once, we started with a focused Find MVP, supporting both keyword and semantic search. This allowed us to validate real search results with customer data and iterate quickly based on feedback.

We then introduced Filters, including item type, date, source location, and creator, to help teams narrow results with context.

We intentionally deferred Ask AI, richer result previews, and access control to later iterations so that reliable retrieval came first.

The Find MVP focused on reliable retrieval.

Each result was designed to clearly communicate its type, what makes it unique, where it lives, and how fresh it is.

We surfaced discriminators such as item type, source location, and recency indicators to help users quickly assess relevance before exploring further.

We shipped the MVP with a continuous feedback survey to capture early signals and guide iteration.

After validating core retrieval, we focused on enabling more precise result refinement.

I designed filters for item type, date, source location, and creator. These controls helped teams quickly refine large result sets and focus on relevant artifacts.
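As a rough illustration of how these four filters could narrow a result set, here is a minimal Python sketch. It is not Maze's implementation: the `SearchResult` and `Filters` types, their field names, and the sample items are all hypothetical, chosen only to mirror the filters and result attributes described above.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class SearchResult:
    # Hypothetical fields mirroring the attributes shown on each result.
    title: str
    item_type: str        # e.g. "interview", "survey"
    source_location: str  # where the item lives, e.g. a project name
    creator: str
    updated_on: date      # would drive a recency indicator


@dataclass(frozen=True)
class Filters:
    # None means "no constraint" for that filter.
    item_type: Optional[str] = None
    updated_since: Optional[date] = None
    source_location: Optional[str] = None
    creator: Optional[str] = None


def apply_filters(results: list[SearchResult], f: Filters) -> list[SearchResult]:
    """Keep only the results that satisfy every filter that is set."""
    def matches(r: SearchResult) -> bool:
        return (
            (f.item_type is None or r.item_type == f.item_type)
            and (f.updated_since is None or r.updated_on >= f.updated_since)
            and (f.source_location is None or r.source_location == f.source_location)
            and (f.creator is None or r.creator == f.creator)
        )
    return [r for r in results if matches(r)]


# Made-up sample data, for illustration only.
results = [
    SearchResult("Onboarding interviews", "interview", "Growth", "Ana", date(2024, 5, 2)),
    SearchResult("Checkout survey", "survey", "Payments", "Ben", date(2023, 11, 20)),
    SearchResult("Pricing interviews", "interview", "Payments", "Ana", date(2023, 3, 14)),
]

# Combine two filters: interviews updated since the start of 2024.
recent_interviews = apply_filters(
    results, Filters(item_type="interview", updated_since=date(2024, 1, 1))
)
```

In this sketch the filters are combined with AND, so each additional filter can only shrink the result set. That is one plausible reading of "refine"; a real product might combine multiple values within a single filter with OR.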

Find reduced dependency on manual browsing by introducing a centralized discovery layer. Researchers could access accumulated knowledge faster, shortening time-to-insight and improving reuse of existing findings instead of rerunning research.

The experience also lowered friction for stakeholders seeking evidence, increasing cross-team visibility and strengthening Maze’s positioning against dedicated repository tools. Early feedback indicated that insights were significantly easier to find.

Most importantly, this work laid the foundations for a future where Search and Ask AI work seamlessly with data analysis, enabling teams to synthesize new insights directly from their accumulated research.